Dr. Fabian Plum

Postdoctoral Researcher

Address

Forschungszentrum Jülich GmbH

Wilhelm-Johnen-Straße

52428 Jülich

Institute for Advanced Simulation (IAS)

Civil Safety Research (IAS-7)

Building 09.7 / Room 212

Research Profile

I am a multidisciplinary researcher specialising in computer vision, AI-driven analysis of animal and human movement, and robotics. My research blends deep learning, 3D computer vision, and biomechanical modelling to understand complex interactions in natural and laboratory environments across species.

Research Topics & Methods:

- 3D motion analysis and biomechanical modelling

- Computer vision for behaviour and pose estimation

- Parametric mesh models

- AI/ML for data-sparse environments

- Synthetic data generation for computer vision applications

- Structure from Motion, point-cloud reconstruction, (macro) photogrammetry, and Gaussian splatting

- Deep reinforcement learning-driven interaction and routing policy distillation

- Open-source research software for behaviour analysis

Selected Contributions:

replicAnt: a pipeline for generating annotated images of animals in complex environments using Unreal Engine, 2023 (Nature Communications, doi.org/10.1038/s41467-023-42898-9)

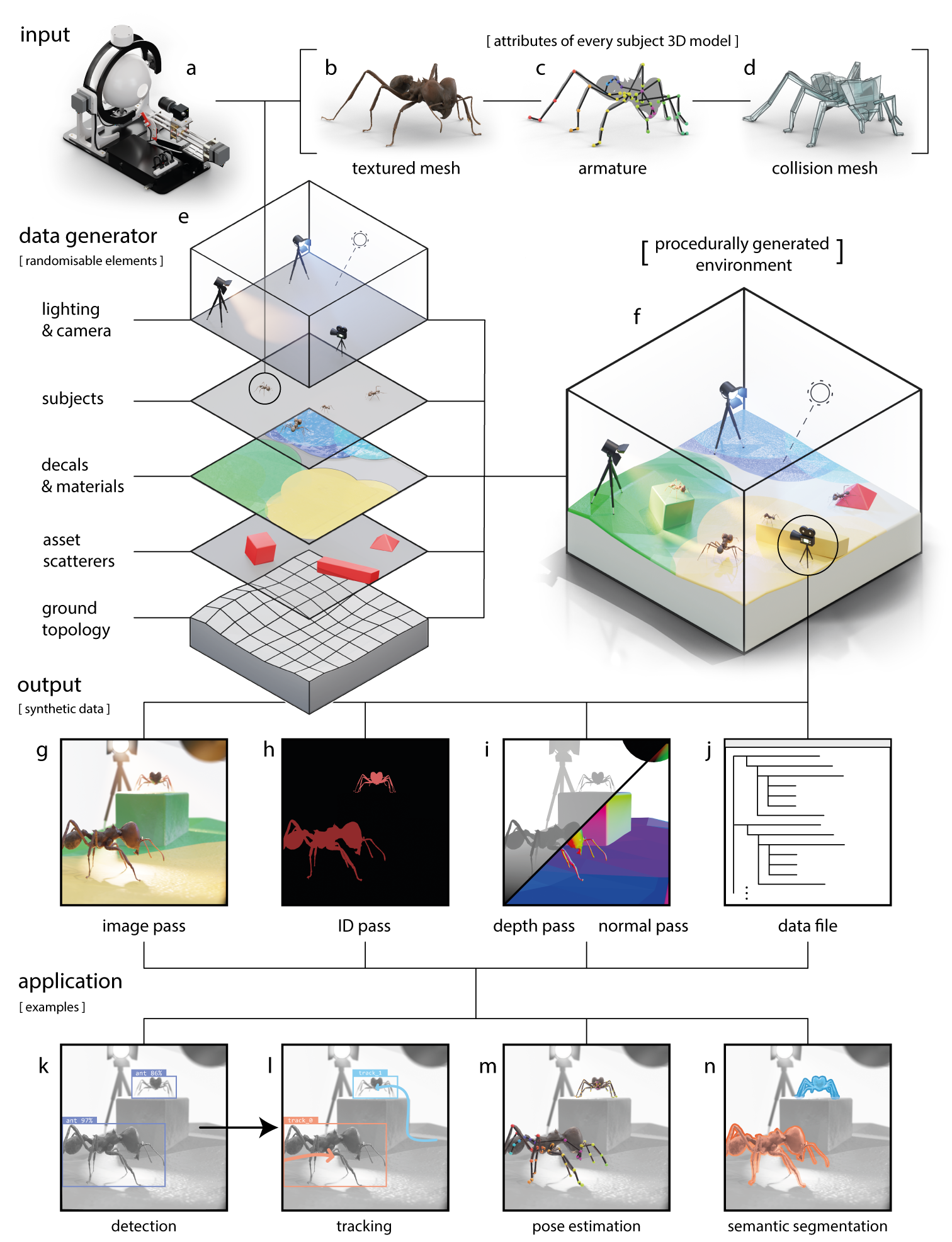

replicAnt produces procedurally generated and richly annotated image samples from 3D models of animals. These images and annotations constitute synthetic data, which can be used in a wide range of computer vision applications. (a) The inputs to the replicAnt pipeline are digital 3D models; we generated high-fidelity models with the open-source photogrammetry platform scAnt. Each model comprises (b) a textured mesh, (c) an armature which provides control over animal pose, and (d) a low-polygonal collision mesh to control the interaction of the model with objects in its environment. (e) 3D models are placed within scenes procedurally generated with the free software Unreal Engine 5. (f) Every scene consists of the same core elements, each with configurable randomisation routines to maximise variability in the generated data. 3D assets are scattered on a ground with complex topology; layered materials, decals, and light sources provide significant variability for the generated scenes. From each scene, we generate (g) image, (h) ID, (i) depth, and normal passes, accompanied by (j) an annotation data text file which contains information on image contents. Deep learning-based computer vision applications which can be informed by the synthetic data generated by replicAnt include (k) detection, (l) tracking, (m) 2D and 3D pose estimation, and (n) semantic segmentation.
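To illustrate how an ID pass can inform a detection task, the following is a minimal sketch that derives per-instance bounding boxes from a label image. It assumes the ID pass has already been decoded into a 2D grid of integers in which 0 marks the background and every positive integer marks one animal; this encoding, the function name `boxes_from_id_pass`, and the toy data are hypothetical and are not taken from replicAnt, whose actual output format may differ.

```python
from typing import Dict, List, Tuple

# (x_min, y_min, x_max, y_max) in inclusive pixel indices
BBox = Tuple[int, int, int, int]


def boxes_from_id_pass(id_pass: List[List[int]], background: int = 0) -> Dict[int, BBox]:
    """Return one axis-aligned bounding box per instance ID.

    Assumption: `id_pass` is a row-major grid of integer instance IDs,
    with `background` marking pixels that belong to no animal.
    """
    boxes: Dict[int, BBox] = {}
    for y, row in enumerate(id_pass):
        for x, instance_id in enumerate(row):
            if instance_id == background:
                continue
            if instance_id not in boxes:
                # First pixel seen for this instance: start a 1x1 box.
                boxes[instance_id] = (x, y, x, y)
            else:
                # Grow the existing box to include this pixel.
                x0, y0, x1, y1 = boxes[instance_id]
                boxes[instance_id] = (min(x0, x), min(y0, y), max(x1, x), max(y1, y))
    return boxes


# Hypothetical 4x5 ID pass containing two instances (IDs 1 and 2).
toy_id_pass = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 2],
    [0, 1, 0, 0, 2],
    [0, 0, 0, 0, 0],
]
boxes = boxes_from_id_pass(toy_id_pass)
print(boxes)
```

Because the boxes are computed directly from rendered instance labels, no manual annotation step is involved, which is the general appeal of synthetic data for training detectors.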

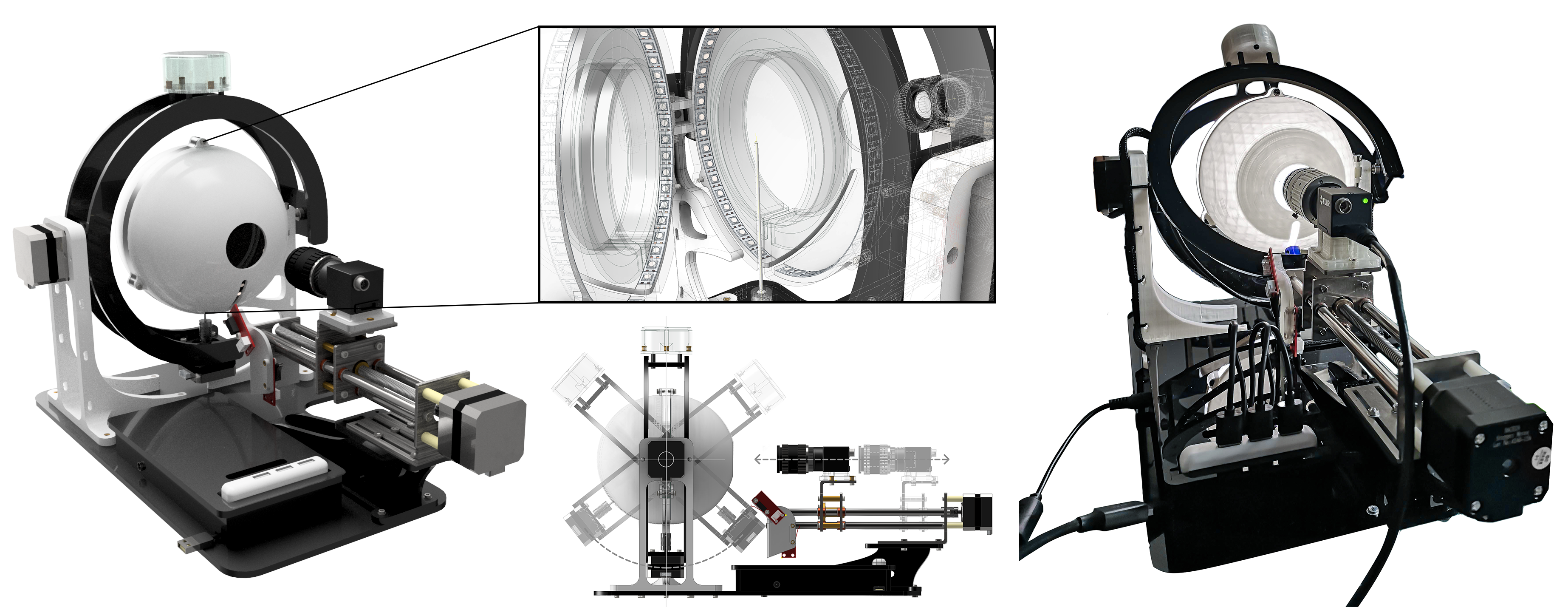

scAnt: an open-source platform for the creation of 3D models of arthropods (and other small objects), 2021 (PeerJ 9:e11155)

scAnt is an open-source, low-cost macro 3D scanner designed to automate the creation of full-colour digital 3D models of insects of various sizes. scAnt provides example configurations for the scanning process, as well as scripts for stacking and masking images to prepare them for the photogrammetry software of your choice. Examples of models generated with scAnt can be found at http://bit.ly/ScAnt-3D as well as in our Sketchfab Collection.

OmniTrax: a deep learning-driven multi-animal tracking and pose-estimation add-on for Blender, 2024 (Journal of Open Source Software, doi.org/10.21105/joss.05549)

Automated multi-animal tracking example (trained on synthetic data)

OmniTrax is an open-source Blender add-on designed for deep learning-driven multi-animal tracking and pose estimation. It leverages recent advancements in deep learning-based detection (YOLOv3, YOLOv4) and computationally inexpensive buffer-and-recover tracking techniques. OmniTrax integrates with Blender's internal motion tracking pipeline, making it well suited to annotating and analysing large video files containing numerous freely moving subjects. Additionally, it integrates DeepLabCut-Live for marker-less pose estimation on arbitrary numbers of animals, using both the DeepLabCut Model Zoo and custom-trained detector and pose estimator networks.

SMILify: Species-agnostic parametric mesh recovery (in preparation, github.SMILify)

SMILify is a computational framework for species-agnostic parametric mesh recovery from (multi-camera) RGB video. The system enables researchers to configure custom parametric models for any species, supporting both animal and human behavioural research. Through deep learning-based inference, SMILify predicts 3D mesh parameters (pose, shape, camera) from monocular as well as synchronised multi-view imagery, enabling accurate 3D reconstruction without species-specific constraints.