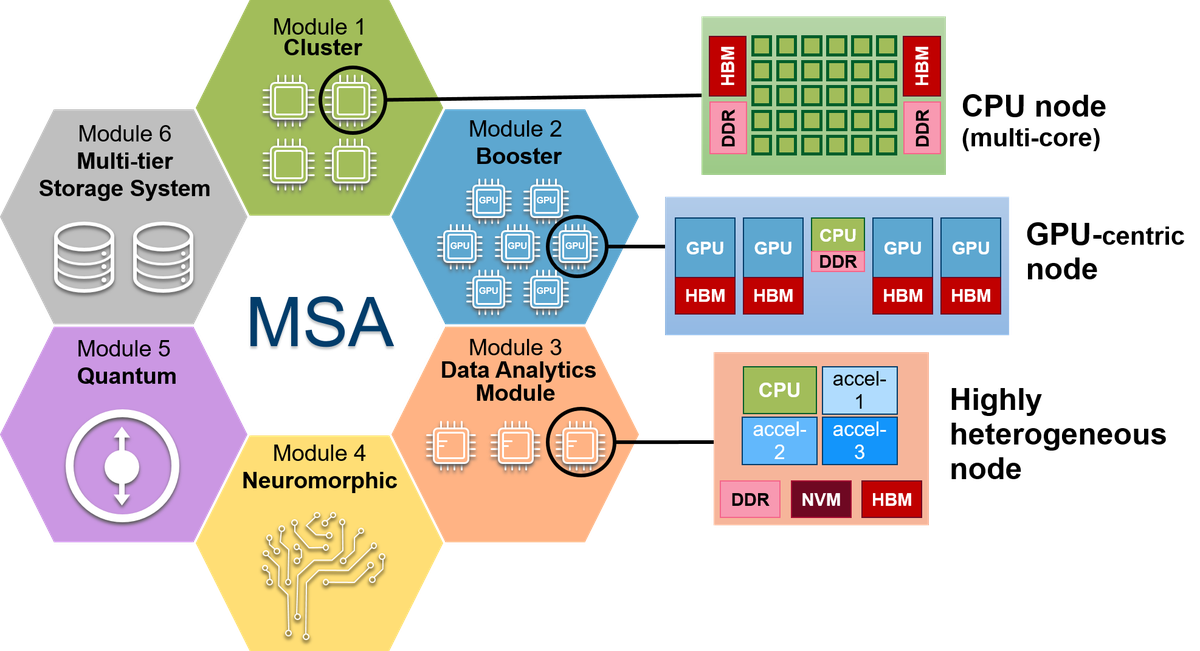

Modular Supercomputing Architecture (MSA)

The Modular Supercomputing Architecture (MSA) is a novel system-level design that integrates heterogeneous resources to match the requirements of a wide spectrum of application fields, ranging from computationally intensive, highly scalable simulation codes to data-intensive artificial intelligence workflows.

The MSA has been developed within the DEEP project series.

Within the MSA, several compute modules of potentially large size, each tuned to best match the needs of a certain class of algorithms, are connected to each other at the system level to create a single heterogeneous system (see figure). Power consumption is kept in check by using power-hungry, general-purpose processing components only where they are absolutely necessary (in the cluster module). Energy-efficient, highly parallel technologies (e.g., GPUs) are scaled up and employed for high-throughput and capability-computing codes (in the booster module). Disruptive technologies (e.g., neuromorphic or quantum) can be integrated through dedicated modules.

A common software stack enables users to map the intrinsic application requirements (e.g., their need for different acceleration technologies and varying memory types or capacities) onto the hardware: highly scalable code parts run on the energy-efficient booster (a cluster of accelerators), while less scalable code parts profit from the single-thread performance of the general-purpose cluster. Likewise, new types of hybrid application workflows become possible by exploiting the capabilities of modules that implement disruptive technologies (e.g., quantum computers or neuromorphic devices), all under the umbrella of the common software stack. Optimisations for the hardware and middleware are implemented within the stack, enabling both developers and end users of production codes to take advantage of the modular system. A unified software stack is key to easing application coupling and improving agility, as more and more workflows require a complex execution pipeline to produce final results.
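The mapping of code parts onto modules can be illustrated with a small Python sketch. It is not part of the MSA software stack: the `CodePart` type, the `choose_module` function, the 0.9 scalability threshold, and the example workflow are all hypothetical, chosen only to show the idea that highly scalable parts go to the booster, less scalable parts to the cluster, and parts needing a disruptive technology to a dedicated module.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CodePart:
    """One part of an application (hypothetical description format)."""
    name: str
    parallel_fraction: float  # share of the work that scales well, between 0 and 1
    needs: str = ""           # optional special technology, e.g. "quantum"


def choose_module(part: CodePart, threshold: float = 0.9) -> str:
    """Pick a module for a code part (illustrative rule, threshold is assumed)."""
    # Parts that require a disruptive technology go to its dedicated module.
    if part.needs in ("quantum", "neuromorphic"):
        return part.needs
    # Highly scalable parts run on the booster, the rest on the cluster.
    return "booster" if part.parallel_fraction >= threshold else "cluster"


# Invented example workflow with three parts of different character.
workflow = [
    CodePart("mesh_setup", 0.30),
    CodePart("stencil_solver", 0.99),
    CodePart("annealing_step", 0.50, needs="quantum"),
]

placement = {part.name: choose_module(part) for part in workflow}
print(placement)
```

In a real system the decision would rest on much richer information (memory types and capacities, communication patterns, available accelerators) rather than a single threshold.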

A federated network connects the module-specific interconnects, while an optimised resource manager enables assembling arbitrary combinations of these resources according to the application workload requirements. This has two important effects. First, each application can run on a near-optimal combination of resources and achieve excellent performance. Second, all the resources can be put to good use by combining the set of applications in a complementary way, increasing throughput and efficiency of use for the whole system.
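As a rough illustration of how a resource manager might assemble per-job combinations of modules, the following Python sketch uses a simple first-fit rule. It does not reflect the actual MSA resource manager: the `schedule` function, the module capacities, and the job requests are invented for this example.

```python
from typing import Dict, List, Tuple

Request = Dict[str, int]  # module name -> number of nodes requested


def schedule(capacity: Dict[str, int],
             jobs: List[Tuple[str, Request]]) -> Tuple[List[str], Dict[str, int]]:
    """Start each job, in order, if every module it asks for has enough free nodes."""
    free = dict(capacity)  # copy so the caller's capacity is left untouched
    started = []
    for name, request in jobs:
        fits = all(free.get(module, 0) >= nodes for module, nodes in request.items())
        if fits:
            for module, nodes in request.items():
                free[module] -= nodes
            started.append(name)
    return started, free


# Assumed capacities and requests, for illustration only.
capacity = {"cluster": 50, "booster": 75}
jobs = [
    ("climate", {"cluster": 40, "booster": 10}),   # mostly cluster nodes
    ("ml_training", {"booster": 60}),              # booster only
    ("cfd", {"cluster": 20, "booster": 20}),       # does not fit in this round
]

started, free = schedule(capacity, jobs)
print(started, free)
```

Here the cluster-heavy and booster-heavy jobs complement each other and run side by side, which is the throughput effect described above; a production scheduler would additionally handle priorities, queueing, and backfilling.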