High-Q Club

Highest Scaling Codes on JUQUEEN

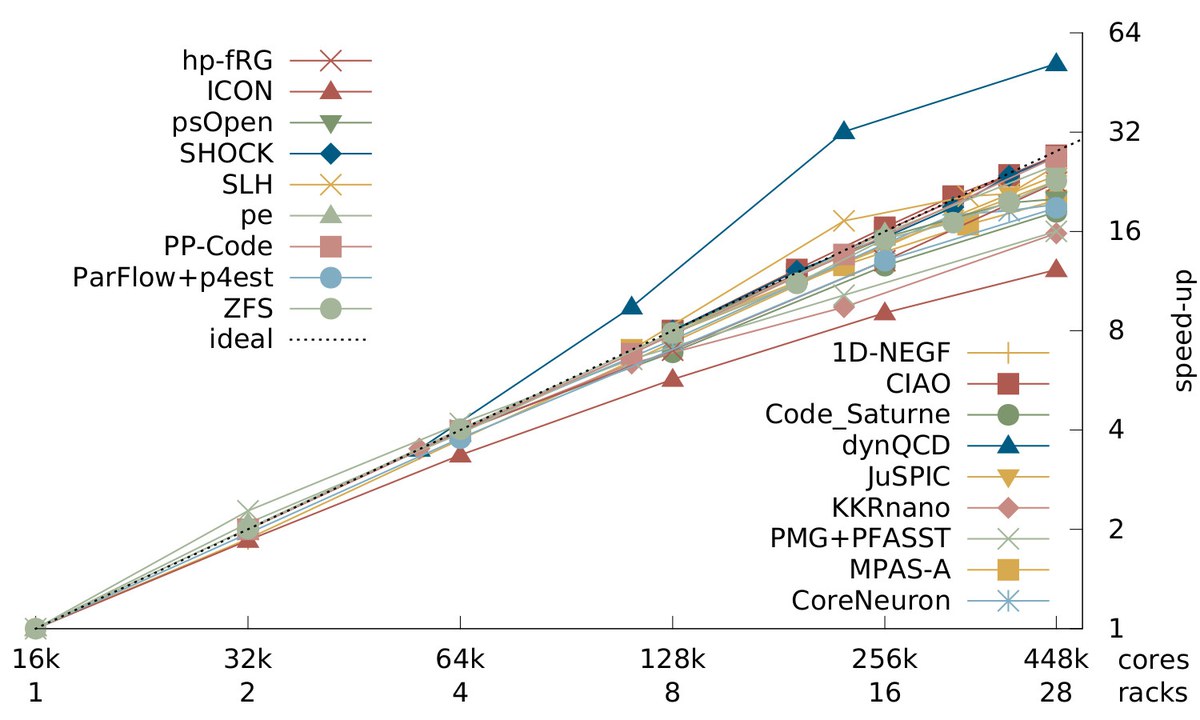

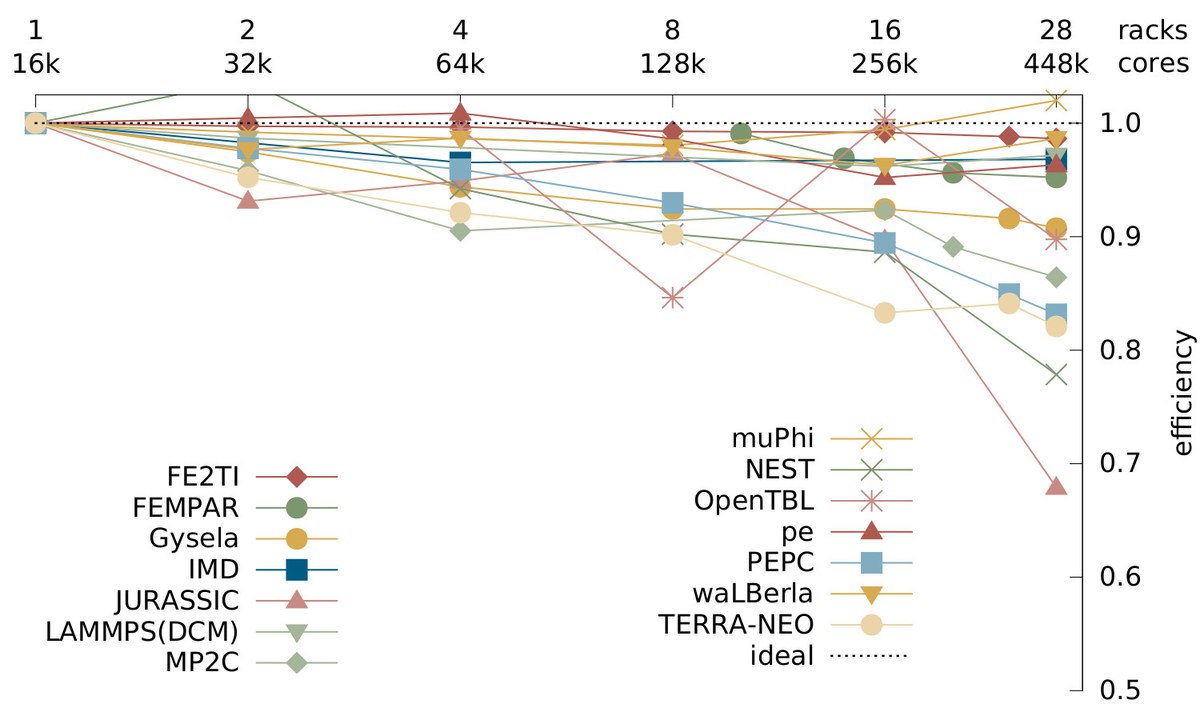

Following our JUQUEEN Porting and Scaling Workshops and Extreme Scaling Workshops, and to promote the idea of exascale capability computing, we established a showcase for codes that at the time could utilise the entire 28-rack BlueGene/Q system at JSC. The goal was to encourage other developers to invest in tuning and scaling their codes and to show that these codes are capable of using all 458,752 cores, aiming at more than 1 million concurrent threads on JUQUEEN.
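The core and thread counts quoted above can be checked with a short calculation. The sketch below is illustrative only: the per-rack and per-node figures (1,024 nodes per rack, 16 compute cores per node, 4 hardware threads per core) are standard BlueGene/Q values assumed here, not numbers stated on this page, and the function name `juqueen_capacity` is hypothetical.

```python
# Assumed BlueGene/Q hardware figures (not stated in the text above).
NODES_PER_RACK = 1024        # compute nodes in one rack
CORES_PER_NODE = 16          # compute cores available to applications
HW_THREADS_PER_CORE = 4      # hardware threads (4-way SMT) per core


def juqueen_capacity(racks=28):
    """Return (nodes, cores, hardware threads) for a given number of racks."""
    nodes = racks * NODES_PER_RACK
    cores = nodes * CORES_PER_NODE
    threads = cores * HW_THREADS_PER_CORE
    return nodes, cores, threads


nodes, cores, threads = juqueen_capacity()
print(f"nodes:   {nodes:,}")
print(f"cores:   {cores:,}")
print(f"threads: {threads:,}")
```

Under these assumptions, 28 racks give 458,752 cores, matching the figure above, and running all four hardware threads per core gives about 1.8 million concurrent threads, which is where the target of more than 1 million comes from.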

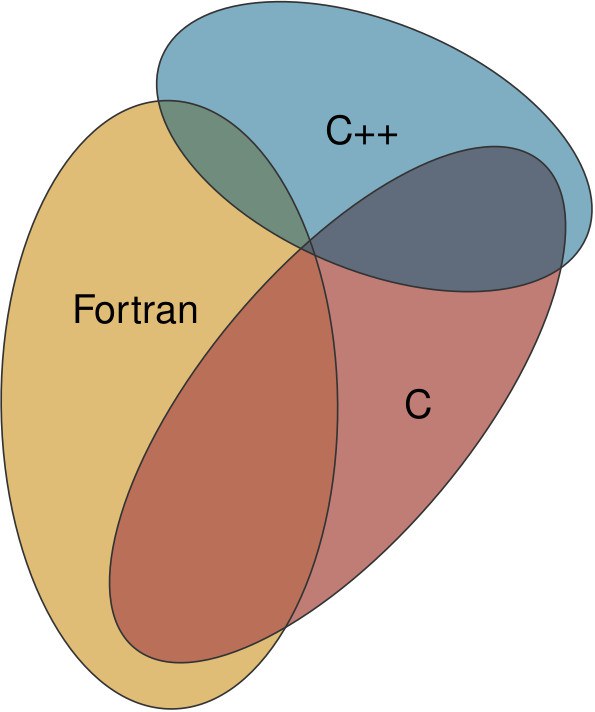

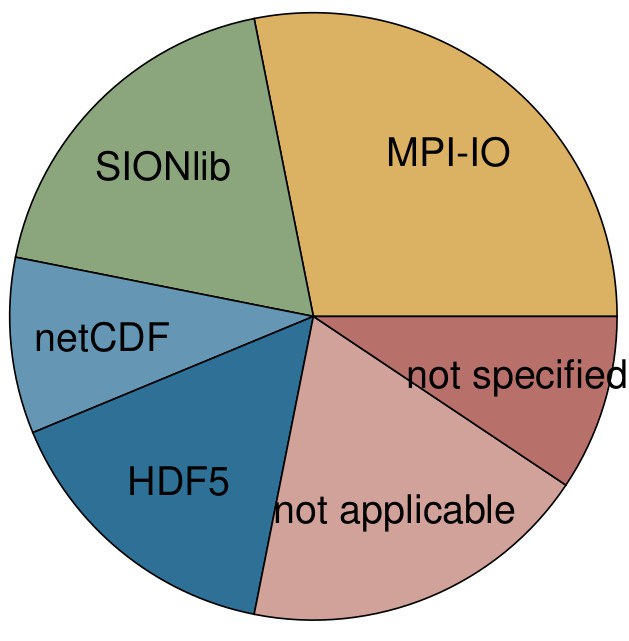

The diverse membership of the High-Q Club shows that it is possible to scale to the complete JUQUEEN using a variety of programming languages and parallelisation models, demonstrating individual approaches to reach that goal. High-Q status marked an important milestone in application development towards future HPC systems that envisage even higher core counts.

Analysing the codes, we found that the benefits to the applications extended beyond the BlueGene/Q architecture to other leadership HPC systems. The lessons learned by JSC and the application developers were more widely applicable and provided insights for expected future exascale applications.

References

- The High-Q Club: Experience with Extreme-scaling Application Codes

- Technical Report from the 2017 Extreme Scaling Workshop

- Extreme-scaling applications en route to exascale

- Technical Report from the 2016 Extreme Scaling Workshop

- Technical Report from the 2015 Extreme Scaling Workshop

- Paving the Road towards Pre-Exascale Supercomputing

- High-Q Club - The highest Scaling codes on JUQUEEN

Codes in the High-Q Club

The following list is kept as a hall of fame for the member codes of the High-Q Club. Since JUQUEEN was decommissioned in spring 2018, any results the codes achieved on this BlueGene/Q system are somewhat outdated. We therefore refer to the above publications for more details and keep the list short, indicating only their programming model, programming language and I/O method (if relevant).

A 1D Non-Equilibrium Green's Function framework for transport phenomena.

MPI + OpenMP / C

CIAO

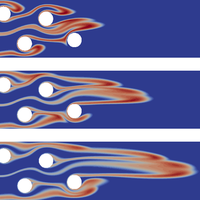

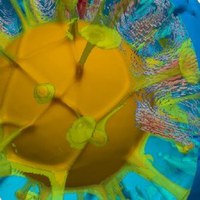

Multiphysics, multiscale Navier-Stokes solver for turbulent reacting flows in complex geometries.

MPI / Fortran / HDF5

An open-source, multiphysics CFD software.

MPI + OpenMP / Fortran + C / MPI-I/O

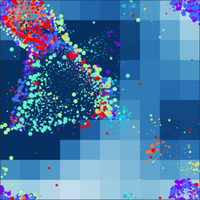

Simulating electrical activity of neuronal networks with morphologically-detailed neurons.

MPI + OpenMP / C + C++ / MPI-I/O

dynQCD

Lattice Quantum Chromodynamics (QCD) with dynamical fermions.

SPI + pthreads / C

A scale-bridging approach incorporating micro-mechanics in macroscopic simulations of multi-phase steels.

MPI + OpenMP / C + C++

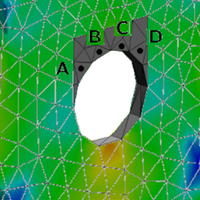

FEMPAR

A framework for the massively parallel FE simulation of multiphysics problems governed by PDEs.

MPI / Fortran

Gysela

A gyrokinetic code from CEA, France, for modelling fusion core plasmas.

MPI + OpenMP + pthreads / Fortran + C / HDF5

A hierarchically parallelised code for renormalisation group calculations.

MPI + OpenMP / C + C++

ICON

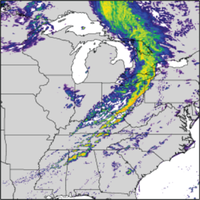

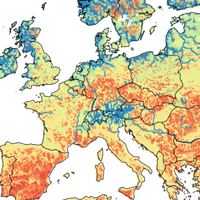

A solver for fully compressible non-hydrostatic equations of motion at very high horizontal resolution.

MPI + OpenMP / Fortran + C / netCDF

A software package for classical molecular dynamics simulations.

MPI / C

JURASSIC

A fast solver for infrared radiative transfer in the Earth's atmosphere.

MPI + OpenMP / C / netCDF

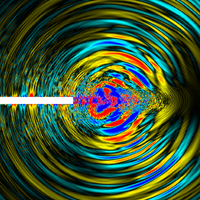

A fully relativistic Particle-in-Cell code for laser-plasma simulations.

MPI + OpenMP / Fortran / MPI-I/O

KKRnano

Korringa-Kohn-Rostoker Green function code for quantum description of nano-materials.

MPI + OpenMP / Fortran / SIONlib

A Dynamic Cutoff Method for a classical molecular dynamics code, the Large-scale Atomic/Molecular Massively Parallel Simulator.

MPI + OpenMP / C++

Fluid simulations using a hybrid representation of solvated particles.

MPI / Fortran / SIONlib

An atmospheric solver for fully compressible non-hydrostatic equations of motion on unstructured Voronoi meshes.

MPI / Fortran + C / SIONlib

μφ (muPhi)

Water flow and solute transport in porous media.

MPI / C++ / SIONlib

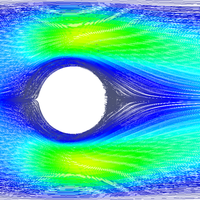

A multicomponent Lattice Boltzmann solver for flow simulations.

MPI + OpenMP / Fortran / MPI-I/O

A simulator for spiking neural network models that focus on the dynamics, size and structure of neural systems.

MPI + OpenMP / C++ / SIONlib

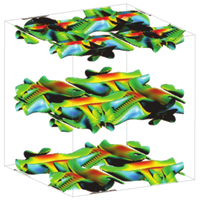

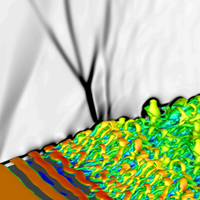

Direct numerical simulation of turbulent flows.

MPI + OpenMP / Fortran / HDF5

An open-source parallel watershed flow model.

MPI / Fortran + C / MPI-I/O

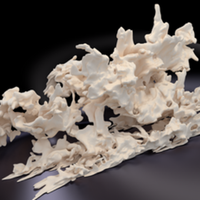

A massively parallel rigid body dynamics framework.

MPI / C++ / MPI-I/O

A particle tree code for solving the N-body problem for Coulomb, gravitational and hydrodynamic systems.

MPI + pthreads / Fortran + C / SIONlib

PMG+PFASST

A space-time parallel solver for systems of ODEs.

MPI + pthreads / Fortran + C

A particle-mesh code for simulating charged and neutral particle dynamics in relativistic and non-relativistic plasmas.

MPI + OpenMP / Fortran / MPI-I/O

psOpen

Direct numerical simulation of fine-scale turbulence.

MPI + OpenMP / Fortran / HDF5

An all Mach number code for fluid dynamics in astrophysics.

MPI + OpenMP / Fortran / MPI-I/O

SHOCK

Direct numerical simulation of compressible flow.

MPI / C / HDF5

A multigrid solver for geophysics applications from the University of Erlangen.

MPI + OpenMP / Fortran + C++

A widely applicable Lattice Boltzmann solver from the University of Erlangen.

MPI + OpenMP / C++ / MPI-I/O

ZFS

A multiphysics framework for compressible and incompressible flow, aeroacoustics, and combustion phenomena.

MPI + OpenMP / C++ / netCDF