A turbo for artificial intelligence (AI)

Computers consume large amounts of energy, as data storage and processing take place in separate components. As an alternative, researchers from Jülich now want to develop economical chips modelled on nature. The technology offers many opportunities, especially for AI applications. The aim is to establish an internationally visible research and development hub in the region in order to accelerate the transfer to industry and to support structural change in the Rhenish coal mining region.

Over the past decades, computer chips have become ever more powerful and their circuits ever smaller. Energy consumption, however, has risen continuously. Despite all the progress, the basic design of computing machines has never changed since the beginning. The vast majority of computers today are based on the von Neumann architecture: at its heart is the processor, which carries out all calculations. The data required for this, however, reside in a physically separate main memory. The exchange of data between the two components makes the circuits run hot.

A factor of 1,000 seems possible

A concept for an alternative architecture does exist, explains Stephan Menzel: “It is called computation-in-memory, a design in which calculations are carried out directly in the memory. This makes the communication between the memory and the processor obsolete. And that in turn significantly reduces the computer's energy consumption.” In certain applications, the power requirement could even shrink to around one thousandth.

Together with his colleagues at Jülich’s Peter Grünberg Institute (PGI-7) and at RWTH Aachen University as part of the JARA cooperation, he is researching such economical components. These are not based on conventional transistors, but on so-called memristors (memory + resistor). Their switching characteristics are similar to those of the synapses in the human brain.

For these novel circuits, Stephan Menzel has his sights set on an application that is particularly energy intensive: artificial intelligence (AI), specifically: artificial neural networks. “These algorithms can detect hidden patterns. For example, they identify faces, improve weather forecasts or support doctors in their diagnoses,” says Stephan Menzel. These AI systems learn how to filter out the relevant information from a mountain of data.

To do this, they must first be fed with a large amount of training data. If, for example, the algorithms are to recognise human faces in photos, they must first learn from countless portraits what it is that constitutes a human face. The underlying calculations are so extensive that these programmes usually run on high-performance computers, the architecture of which is better adapted to this task than conventional processors. Still, parallel processing of the data devours a large amount of energy.

Interview with Prof. Dr. Rainer Waser (in German)

In an interview, Prof. Dr. Rainer Waser, Director at Forschungszentrum Jülich's Peter Grünberg Institute, explains how neuromorphic chips with memristive elements can help save energy, and gives insight into global developments.

Accelerator for neural networks

In JARA-FIT, Stephan Menzel and his colleague Tobias Ziegler want to give AI computers a boost by means of a special component, making them more economical at the same time. “We have implemented – on a small scale at first – a hardware accelerator that has the potential to compute artificial neural networks faster and more efficiently,” explains Tobias Ziegler.

Artificial neural networks are at the heart of the majority of AI systems. Their mode of operation follows the way data is processed in the brain. In the human brain, the individual nerve cells exchange their impulses via thousands of synapses. When learning, these contact points are specifically strengthened or weakened. In the artificial counterpart, it is “mathematical neurons”, which are also connected to other cells, that do the calculating. The strength of such a connection, called its weight, also changes as the training data is learned.
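How a weight is strengthened or weakened during learning can be made concrete with a minimal sketch in Python. It uses the classic perceptron learning rule as a stand-in. The function names, the threshold and the learning rate are illustrative assumptions and are not taken from the Jülich work.

```python
def neuron_output(inputs, weights, threshold=0.0):
    """Return 1 if the weighted sum of the inputs exceeds the threshold, else 0."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return 1 if weighted_sum > threshold else 0


def train_step(inputs, weights, target, learning_rate=0.1):
    """Perceptron rule: adjust each weight in proportion to the output error."""
    error = target - neuron_output(inputs, weights)
    return [w + learning_rate * error * x for x, w in zip(inputs, weights)]


# One training example: both inputs are active and the neuron should fire.
inputs = [1, 1]
weights_before = [0.0, 0.0]
weights_after = train_step(inputs, weights_before, target=1)

fired_before = neuron_output(inputs, weights_before)
fired_after = neuron_output(inputs, weights_after)
```

In this toy case the neuron stays silent with the initial weights and fires after a single update, because both connections have been strengthened.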

The brain as a role model

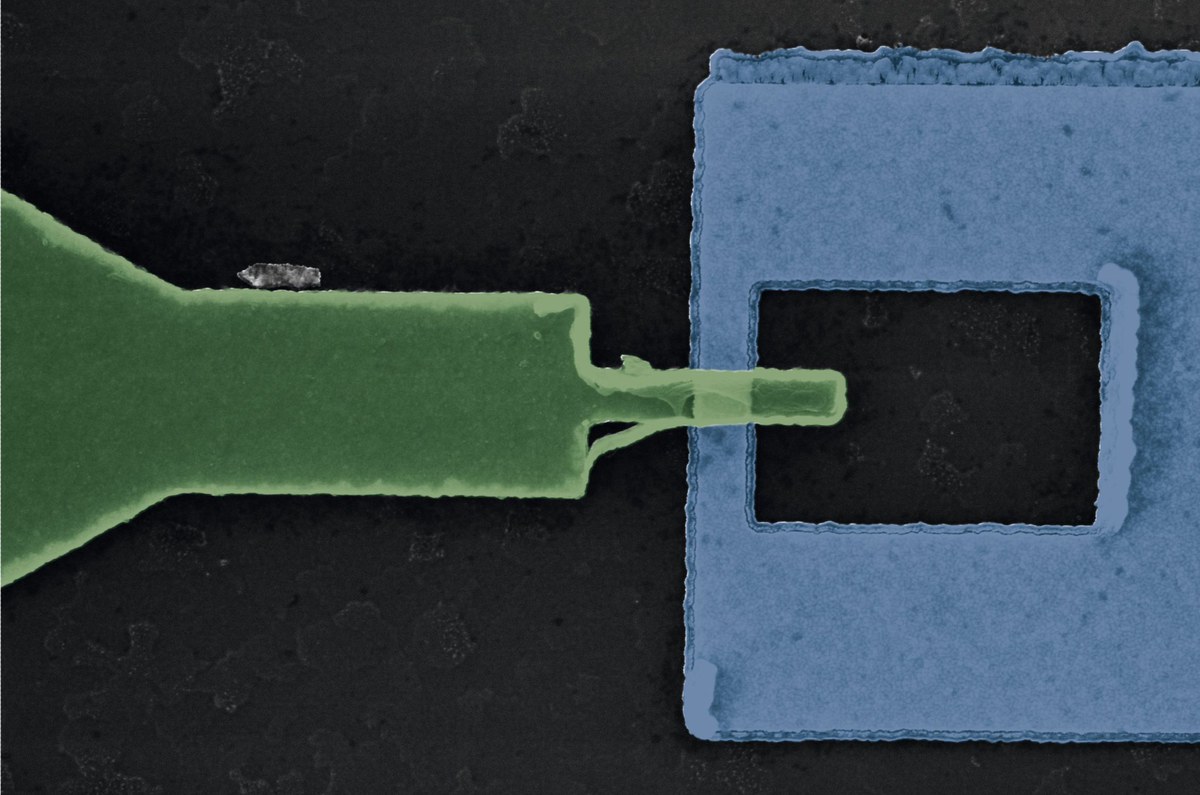

In a conventional computer, this requires a lot of data to be shuttled back and forth between the processor and the memory. The “neuromorphic” circuits that Stephan Menzel and his colleagues are developing, on the other hand, can mimic the synapses of neural networks. The calculation is then performed directly within the memory. This is made possible by the tiny resistance switches that make up the circuits.

Stephan Menzel: “Here at the institute, we have been working with these components, the memristors, for many years. Their electrical resistance can be switched back and forth between two states – between a high and a low value.”

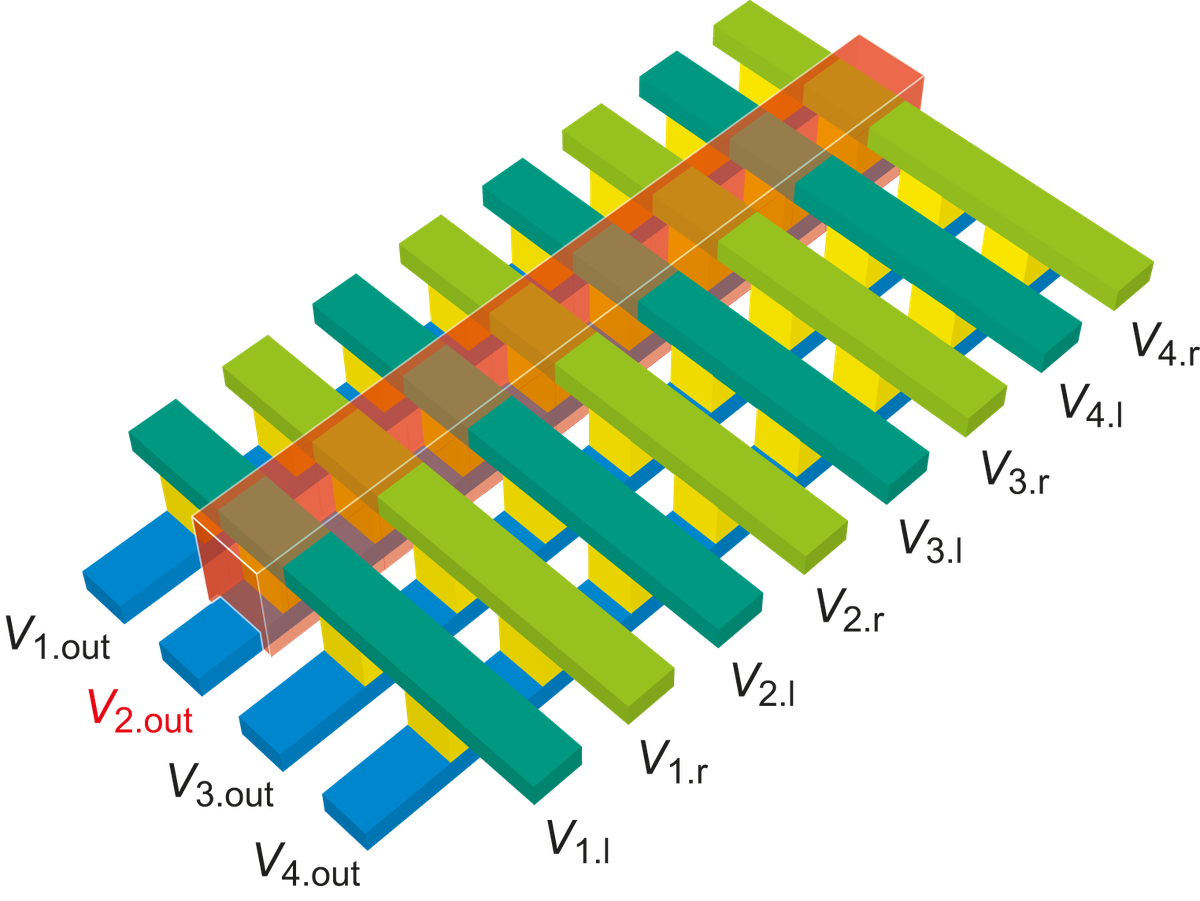

The respective value remains even when the switching voltage is no longer applied. The switches are therefore suitable as non-volatile memory for data. They can do even more, however. Combining several of these switches allows for long lines of memory cells to be built, which are in contact with each other via a common electrode. In this arrangement, computing operations can be applied to stored information directly.

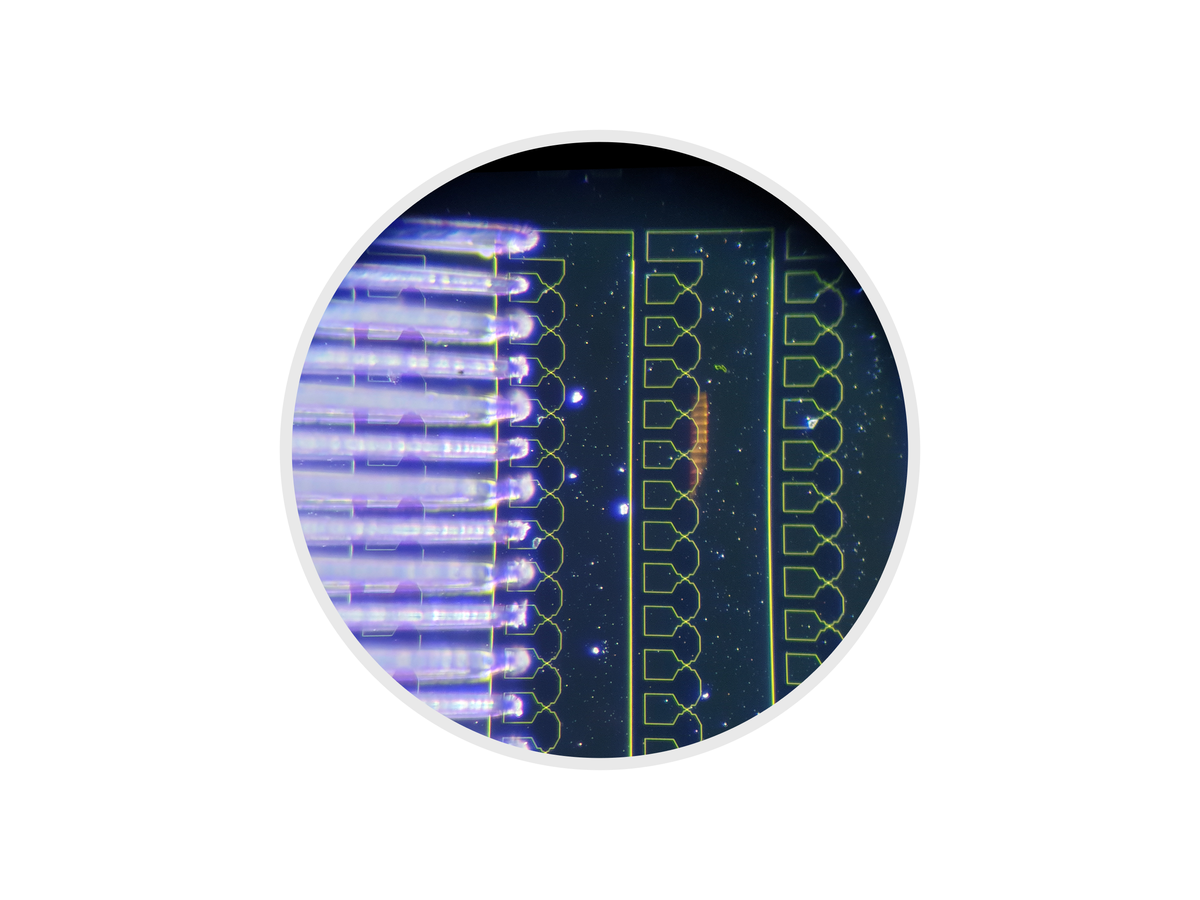

In the NEUROTEC project, the research group at PGI-7 is currently testing the concept in practice for the first time, in order to perform calculations for AI with the switches. “We first stored some weights in this arrangement; a part of the training result, so to speak. Then we coded the data to be calculated into voltage signals and measured the voltage change at the common electrode,” explains Tobias Ziegler.
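The operation named in the title of the original publication, a binary vector–matrix multiplication, can be written down in a few lines of Python. The sketch below only shows the arithmetic that such an arrangement is meant to carry out; it does not model the hardware. The function name and the example bits are hypothetical, and the convention that each row stands for one line of cells on a common electrode is an assumption made for illustration.

```python
def binary_vector_matrix_product(input_bits, weight_rows):
    """Multiply a binary input vector with a binary weight matrix.

    weight_rows[j] holds the bits stored along line j. The result for that
    line is the number of positions where input bit and stored bit are both 1.
    """
    return [sum(x & w for x, w in zip(input_bits, row)) for row in weight_rows]


# Hypothetical stored weights: three lines with four cells each.
stored_weights = [
    [1, 0, 1, 1],
    [0, 1, 1, 0],
    [1, 1, 0, 0],
]
input_bits = [1, 0, 1, 0]

result = binary_vector_matrix_product(input_bits, stored_weights)
print(result)  # [2, 1, 1]
```

In a computation-in-memory circuit, each of these sums would not be computed step by step by a processor but would appear as an analogue signal on the line that holds the corresponding weights.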

Complementary connection

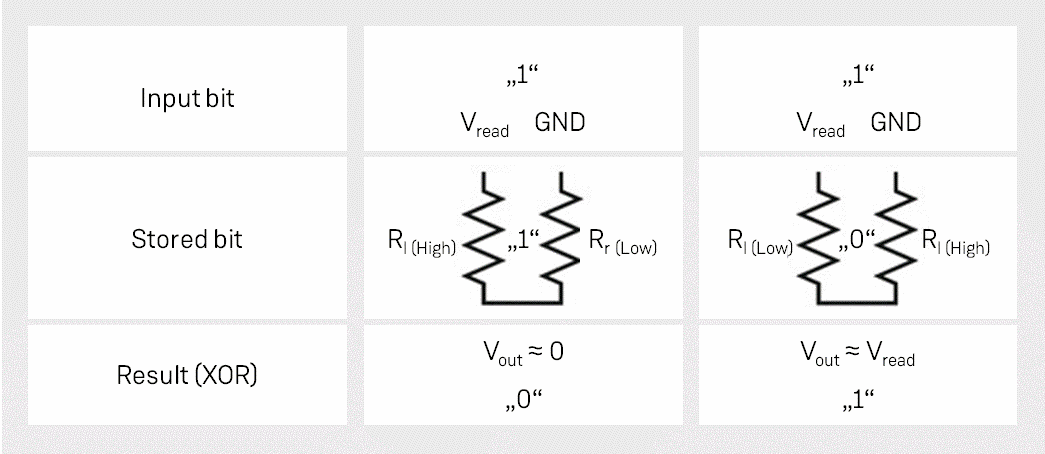

The Jülich researchers are not alone in working on this topic. Companies and research groups all over the world are engaging in the development of neuromorphic circuits based on memristors. The circuit concept pursued by Tobias Ziegler and his colleagues, however, has a special feature compared to the standard design: the memristive components are not controlled individually. Instead, the cells are always connected in complementary pairs.

“The cells are always operated in the exact opposite direction. If one has a low resistance, its counterpart gets a high one. So, all in all, you always have one low and one high resistance value,” explains Stephan Menzel.

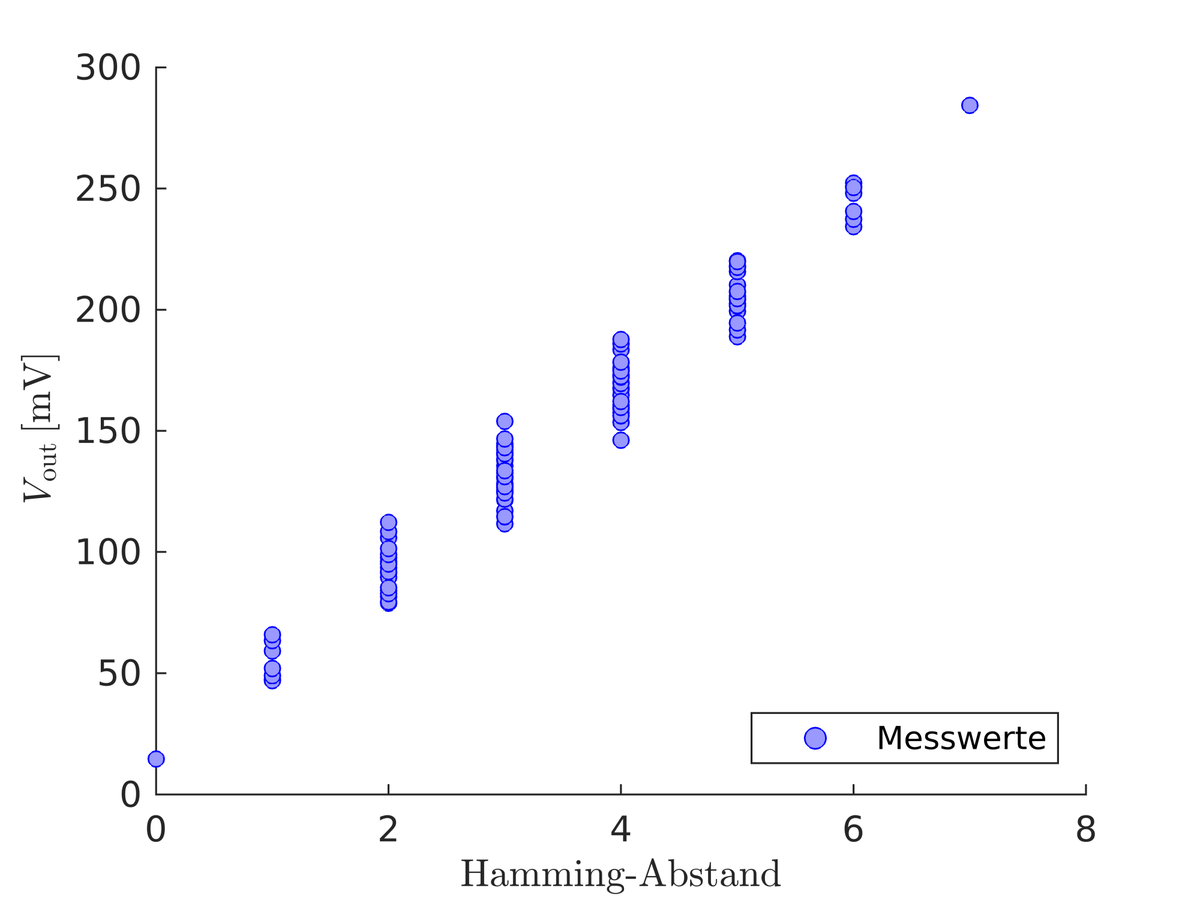

This alternative arrangement has a potentially decisive advantage in practice: the output voltage increases linearly with the computational result.

“I think that the linear voltage output contributes significantly to simplifying the periphery,” explains Stephan Menzel. “A linear voltage increase is much easier to handle. Practically every readout electronics is designed for this.” Another figure also shows that this part of the electronics should not be neglected: “In other computation-in-memory approaches that other groups have studied, more than 95 per cent of the area and more than 90 per cent of the power are taken up by routing and readout electronics,” Tobias Ziegler adds.
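Why complementary pairs can give a linear voltage output can be illustrated with a deliberately simple resistor-network model in Python. This is a toy model under stated assumptions and not the circuit from the publication: the resistance values, the read voltage, the wiring (one cell of each pair driven with the input bit, the other with its inverse) and the choice to count matching bits as the result are all hypothetical. The only physics used is Millman's theorem for the voltage of a floating node connected to several sources through resistors.

```python
# Toy model only: all values and the wiring are illustrative assumptions.
R_LOW = 10e3    # assumed low-resistance state in ohms
R_HIGH = 1e6    # assumed high-resistance state in ohms
V_READ = 0.2    # assumed read voltage in volts


def pair_conductances(weight_bit):
    """Return (g_a, g_b) for one complementary pair storing a single bit.

    The two cells are always in opposite states, so every pair contributes
    the same total conductance regardless of the stored bit.
    """
    g_on, g_off = 1.0 / R_LOW, 1.0 / R_HIGH
    return (g_on, g_off) if weight_bit else (g_off, g_on)


def common_electrode_voltage(input_bits, weight_bits):
    """Voltage of the floating common node according to Millman's theorem.

    Cell A of each pair is driven with the input bit, cell B with its inverse.
    """
    numerator = 0.0
    denominator = 0.0
    for x, w in zip(input_bits, weight_bits):
        g_a, g_b = pair_conductances(w)
        numerator += g_a * x * V_READ + g_b * (1 - x) * V_READ
        denominator += g_a + g_b
    return numerator / denominator


weights = [1, 0, 1, 1]
# Inputs chosen so that 0, 1, 2, 3 and 4 positions match the stored weights.
inputs_by_matches = [
    [0, 1, 0, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [1, 0, 1, 0],
    [1, 0, 1, 1],
]
voltages = [common_electrode_voltage(x, weights) for x in inputs_by_matches]
# Voltage gained per additional match; equal steps mean a linear output.
steps = [b - a for a, b in zip(voltages, voltages[1:])]
```

Because each pair always contains one low and one high resistance, the denominator in this model does not depend on the stored weights. Every additional match therefore raises the node voltage by the same amount, which is the kind of linear behaviour the researchers describe.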

First demonstrator in spring 2021

The Jülich researchers have already realised a first circuit with 14 cells. They were thus able to prove the feasibility of their concept. In the next step, a demonstrator is to clarify how the concept will perform in practice. A first test chip is to be finished in spring 2021 that will still contain memristive components from a commercial manufacturer.

Later in the NEUROTEC project, the memristor specialists from Jülich also want to put chips with their own memristive components to the test. A performance comparison will then show how different circuit designs and materials perform in practice. For this purpose, various memristive devices will be integrated directly on-site at the Helmholtz Nano Facility (HNF) with the help of facilities that were commissioned as part of the NEUROTEC project.

It will be interesting to see which concept will ultimately prevail for these neuromorphic chips combining memory and processor. Many experts see this as the key to energy-saving and fast AI hardware of the future.

Arndt Reuning

International location for neuromorphic computers

The work is part of the Federal Ministry of Education and Research’s project NEUROTEC. The aim is to create an internationally visible location for cutting-edge research in the field of memristive circuits for neuromorphic computers at Forschungszentrum Jülich and RWTH Aachen University. The project is funded by the immediate action programme for structural change, as the research is intended to involve industrial partners from the region in order to provide new impulses and strengthen the locations in the Rhineland Region. Coating systems, measurement technology and software from regional manufacturers are used in the project.

Digital End-of-Year Lecture 2020 (in German)

Structural change was also the focus of Jülich’s End-of-Year Lecture 2020. Under the title “New Thinking, New Opportunities: How Research Contributes to Structural Change”, Jülich scientists provided insight into their exciting projects that promote and support the region in structural change.

Original publication:

Tobias Ziegler, Rainer Waser, Dirk J. Wouters, Stephan Menzel

In‐Memory Binary Vector–Matrix Multiplication Based on Complementary Resistive Switches

Advanced Intelligent Systems (2020), DOI: 10.1002/aisy.202000134

Further information:

Press release "Artificial Synapses on Design" of 11 May 2020

Press release "The Brain as a Model for the Computer Technology of Tomorrow" of 8 January 2020